Separating the Voices of a Choir

My master's thesis is titled Score-informed Source Separation of Choral Music. In simple terms, the research aims to 'unmix' a recording of choir music into a separate recording for each choir voice (soprano, alto, tenor, and bass).

To perform the separation, I used convolutional neural networks, a machine learning technique.

Separating choir music is difficult because choirs are very well-blended: in many cases even an expert human listener cannot differentiate between the voices. For this reason, I've introduced the musical score into the separation process: the neural network is conditioned on the pitch and timing information contained in the score to guide the separation.
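As a rough illustration of what "pitch and timing information" can look like as a network input, the sketch below turns a list of score notes into a binary piano roll (pitch by time frame). This is only an assumption about one possible representation: the function name, the note tuple format, and the frame rate are hypothetical, and the thesis may encode the score differently.

```python
import numpy as np

def score_to_piano_roll(notes, duration, frame_rate=100, n_pitches=128):
    """Build a binary piano roll from score notes.

    notes: iterable of (onset_sec, offset_sec, midi_pitch) tuples (hypothetical format).
    Returns an array of shape (n_pitches, n_frames) with 1.0 where a pitch sounds.
    """
    n_frames = int(round(duration * frame_rate))
    roll = np.zeros((n_pitches, n_frames), dtype=np.float32)
    for onset, offset, pitch in notes:
        start = int(round(onset * frame_rate))
        end = int(round(offset * frame_rate))
        roll[pitch, start:end] = 1.0  # mark the frames in which this note is active
    return roll

# Two soprano notes: C5 for half a second, then D5 for half a second.
soprano_notes = [(0.0, 0.5, 72), (0.5, 1.0, 74)]
roll = score_to_piano_roll(soprano_notes, duration=1.0)
```

A matrix like this could be supplied to a network alongside the audio, one roll per voice, so that the model knows which pitches each voice is expected to sing at each moment.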

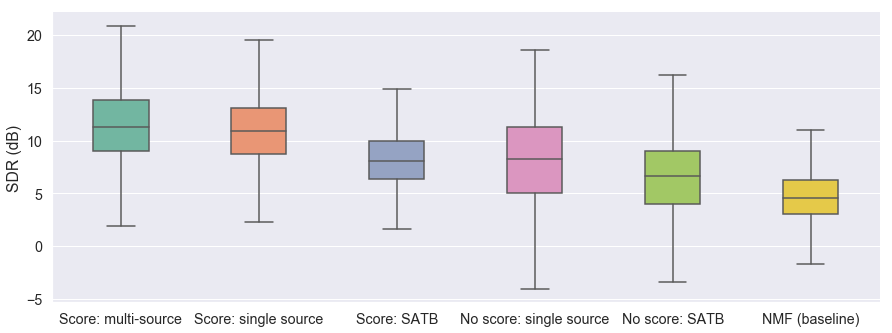

I ran multiple experiments to test three separation techniques: a score-informed NMF baseline, Wave-U-Net (a convolutional neural network), and score-informed Wave-U-Net (my own variant of Wave-U-Net incorporating the musical score).

Score-informed Wave-U-Net achieved the best separation results.

For more information, and to listen to audio examples, click here.

Download the full thesis (a short abstract is on the second page).

Code created for the thesis is released as open-source:

- score-informed-Wave-U-Net: a score-informed variant of Wave-U-Net for source separation

- score-informed-nmf: score-informed NMF (non-negative matrix factorization) implementation in Python

- synthesize-chorales: code used to create the synthesized Bach chorales dataset

- thesis-results-analysis: code used to analyze experiment results and generate figures
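To give a feel for the score-informed NMF idea, here is a minimal sketch, not the code from the score-informed-nmf repository. It assumes the common approach of zeroing the activation matrix wherever the score says a component is silent; multiplicative updates then keep those entries at zero. The function name, the mask format, and the choice of the Euclidean cost are assumptions made for illustration.

```python
import numpy as np

def score_informed_nmf(V, H_mask, n_iter=100, eps=1e-9, seed=0):
    """Factorize a non-negative spectrogram V (freq x time) as W @ H.

    H_mask (components x time) is 1 where the score allows a component to be
    active and 0 elsewhere (hypothetical format).
    """
    rng = np.random.default_rng(seed)
    n_freq, n_frames = V.shape
    n_components = H_mask.shape[0]
    W = rng.random((n_freq, n_components)) + eps
    H = rng.random((n_components, n_frames)) * H_mask  # score constraint
    for _ in range(n_iter):
        # Multiplicative updates for the Euclidean cost; zeros in H stay zero.
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Toy example: two components, each allowed in half of the frames.
mask = np.array([[1, 1, 0, 0], [0, 0, 1, 1]], dtype=float)
V = np.random.default_rng(1).random((6, 2)) @ mask + 0.01
W, H = score_informed_nmf(V, mask, n_iter=50)
```

A fuller implementation would typically also constrain the spectral templates in W (for example, to the harmonics of each pitch); that step is omitted here for brevity.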

Sound Tracks

A game-based musical composition in collaboration with composer Ofer Pelz. The game is inspired by Guitar Hero, but the purpose is not to achieve a high score – instead, the game graphics serve as a musical score for a live performance.

The composer and the performers create the music during the live performance: the composer alters the game parameters to control the score, and the performers add their own interpretations in an improvised manner.

The piece was created for Ensemble Aka and performed in Montreal and New York.

MusicQuery

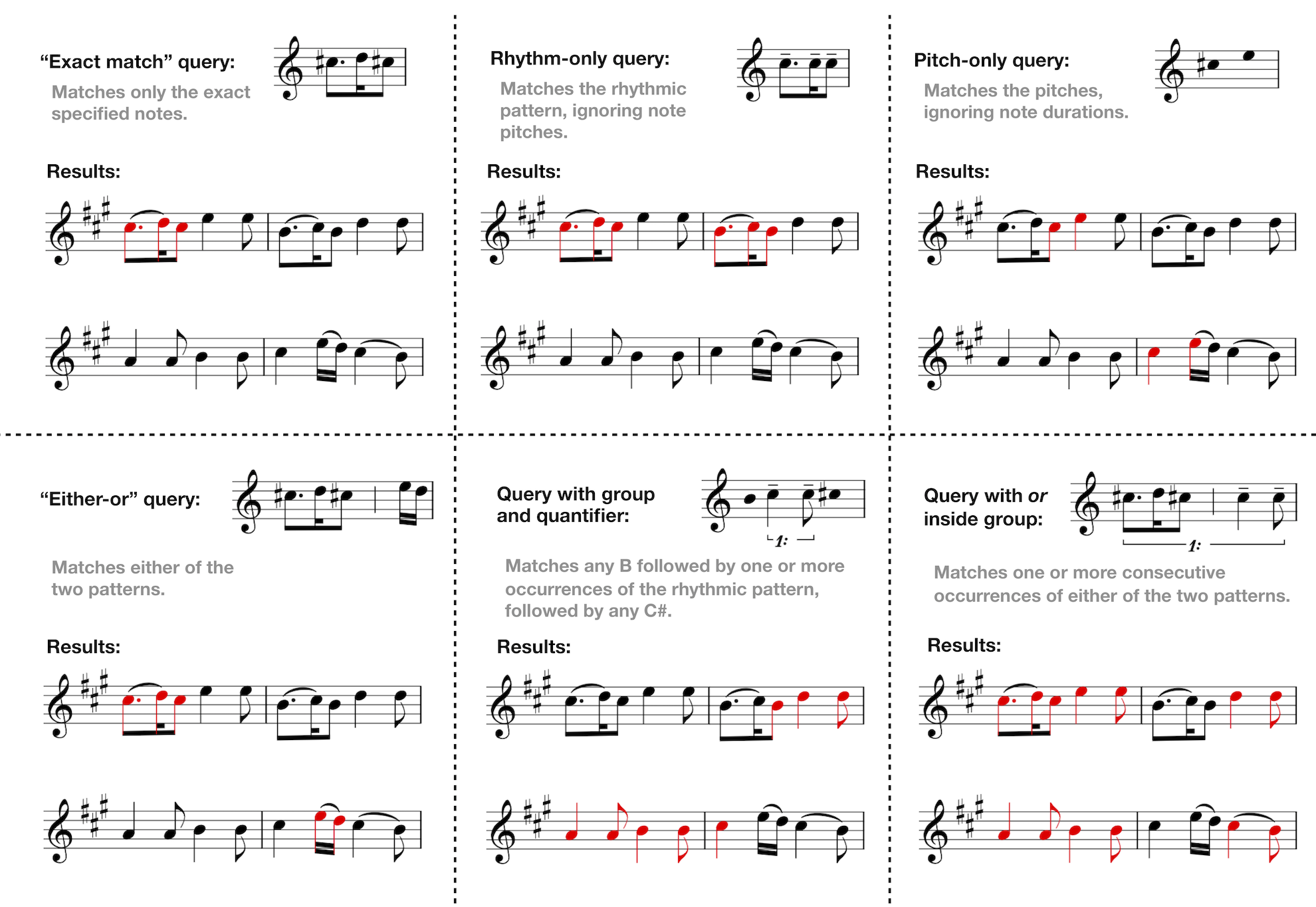

A graphical query language that I designed for finding patterns in symbolic music.

Sometimes musicians and researchers want to search for patterns in musical scores, just like one can search for text on the web. Existing tools for this task are often hard to use and require programming knowledge.

MusicQuery makes searching easier for musicians because it is based on standard music notation. Although it is simple to use, it supports complex patterns. For example, you could search for 'at least two quarter notes followed by a C note followed by one or more G notes'.
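To make the meaning of that example query concrete, the sketch below expresses it as a regular expression over a simplified text encoding of a melody. This is only an illustration of the pattern's semantics and not how MusicQuery works internally: the two-character note encoding (pitch letter plus duration code), the function name, and the omission of accidentals and octaves are all assumptions.

```python
import re

# Each note is encoded as two characters: an uppercase pitch letter (A-G)
# followed by a lowercase duration code (w=whole, h=half, q=quarter, e=eighth).
PATTERN = re.compile(
    r"(?:[A-G]q){2,}"   # at least two quarter notes of any pitch
    r"C[a-z]"           # followed by a C note of any duration
    r"(?:G[a-z])+"      # followed by one or more G notes of any duration
)

def find_pattern(notes):
    """Return (start, end) note indices of the first match, or None.

    notes: list of (pitch_letter, duration_code) tuples (hypothetical format).
    """
    encoded = "".join(pitch + duration for pitch, duration in notes)
    match = PATTERN.search(encoded)
    if match is None:
        return None
    # Two characters per note, so character offsets map to note indices.
    return match.start() // 2, match.end() // 2

melody = [("E", "q"), ("D", "q"), ("C", "h"), ("G", "e"), ("G", "e"), ("A", "q")]
result = find_pattern(melody)  # matches notes 0 through 4 (end index is exclusive)
```

Writing and reading such an expression takes some programming background, which is the kind of barrier a notation-based query language aims to remove.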

View code on GitHub.

Query examples:

BEATogether

A software loop station that explores how two people can make music together using only their bodies as instruments. BEATogether maps skeleton tracking data from a Kinect camera to musical parameters in Ableton Live and records multiple layers on top of each other. The result is a rich musical experience that encourages performers to explore their interaction with each other and with the device.

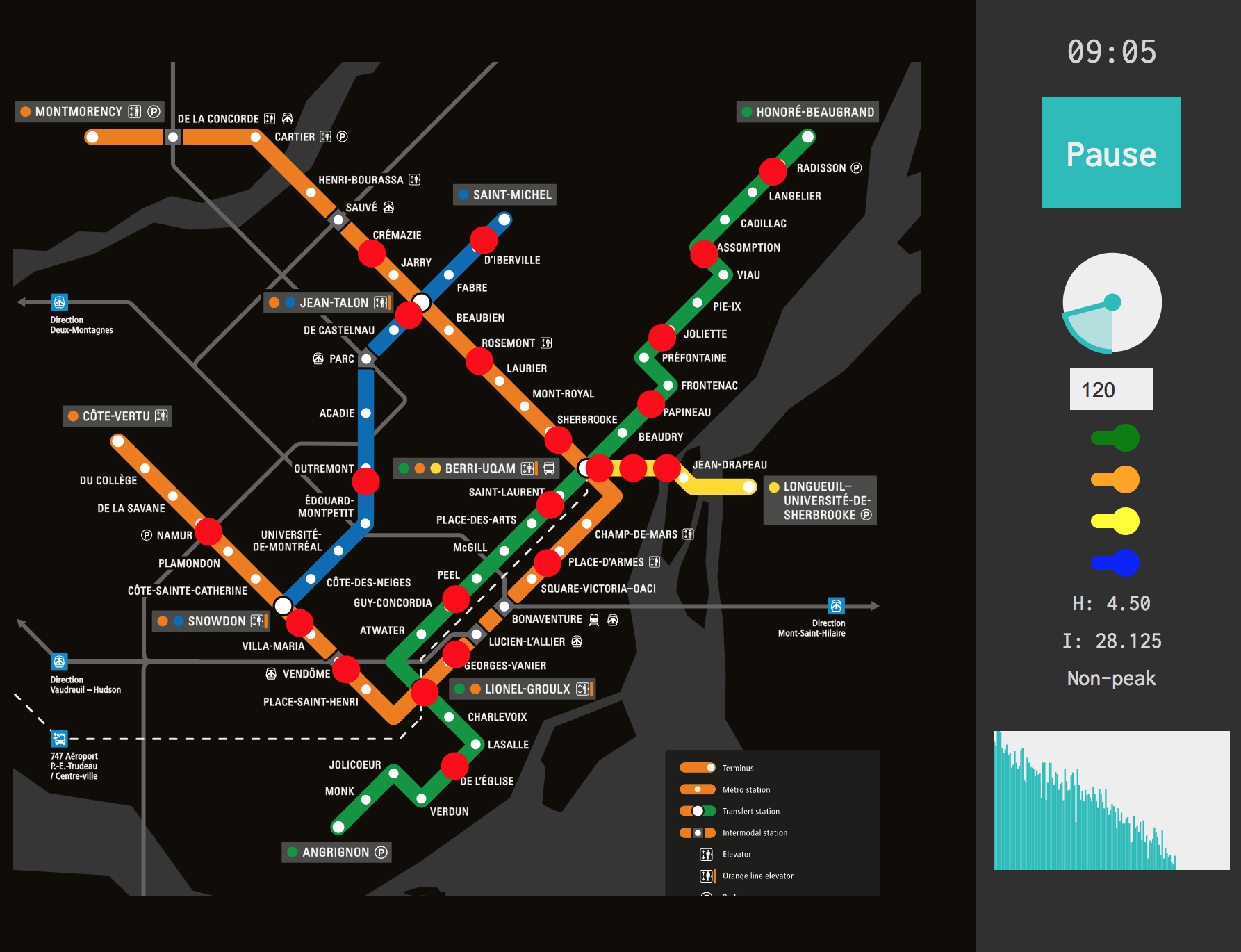

Metrosynth

An interactive sonification of public transport schedules. The Montreal metro system is run on a simulated timeline according to the real schedule. The metro system acts like a sequencer: when a metro train arrives at a station, it triggers a musical event assigned to that station.

Each metro line is modeled as a separate audio track. Each track uses a different synthesis method (FM or AM synthesis), and the synthesis parameters vary with factors such as the current time and peak versus off-peak metro schedules.
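The sequencer idea can be sketched offline in a few lines: each train arrival places a short FM tone on the line's audio track at the arrival time. This is an assumed, simplified rendering in Python rather than the actual Metrosynth code; the function names, station names, pitches, and modulation settings are all hypothetical.

```python
import numpy as np

def fm_tone(carrier_hz, mod_hz, mod_index, duration, sample_rate=8000):
    """Basic FM synthesis: a sine carrier whose phase is modulated by another sine."""
    t = np.arange(int(duration * sample_rate)) / sample_rate
    return np.sin(2 * np.pi * carrier_hz * t
                  + mod_index * np.sin(2 * np.pi * mod_hz * t))

def render_line(arrivals, station_pitch_hz, total_duration, sample_rate=8000):
    """Render one metro line as an audio track.

    arrivals: list of (time_sec, station_name) pairs taken from a schedule.
    station_pitch_hz: dict mapping each station to the pitch it triggers.
    """
    out = np.zeros(int(total_duration * sample_rate))
    for time_sec, station in arrivals:
        start = int(time_sec * sample_rate)
        if start >= len(out):
            continue  # arrival falls outside the rendered time window
        pitch = station_pitch_hz[station]
        tone = fm_tone(pitch, mod_hz=2 * pitch, mod_index=2.0,
                       duration=0.2, sample_rate=sample_rate)
        end = min(start + len(tone), len(out))
        out[start:end] += tone[:end - start]  # mix the event into the track
    return out

# Two arrivals on a made-up line with made-up station pitches.
arrivals = [(0.5, "Station A"), (1.0, "Station B")]
pitches = {"Station A": 220.0, "Station B": 330.0}
track = render_line(arrivals, pitches, total_duration=2.0)
```

In a sketch like this, schedule-dependent variation could be added by making `mod_index` or the tone duration a function of the arrival time.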

Try it in the live demo (modern browser required).

Code and more details: metrosynth on GitHub.

Screenshot:

Dereverberation

A MATLAB implementation of a patented sound dereverberation algorithm proposed by Gilbert Soulodre in the following paper:

Soulodre, Gilbert A. 2010. “About this dereverberation business: A method for extracting reverberation from audio signals.” In Audio Engineering Society Convention 129. Audio Engineering Society.

Code and audio examples are available in the dereverberate repository on GitHub. I created this implementation for the course Digital Audio Signal Processing by Prof. Philippe Depalle at McGill University.

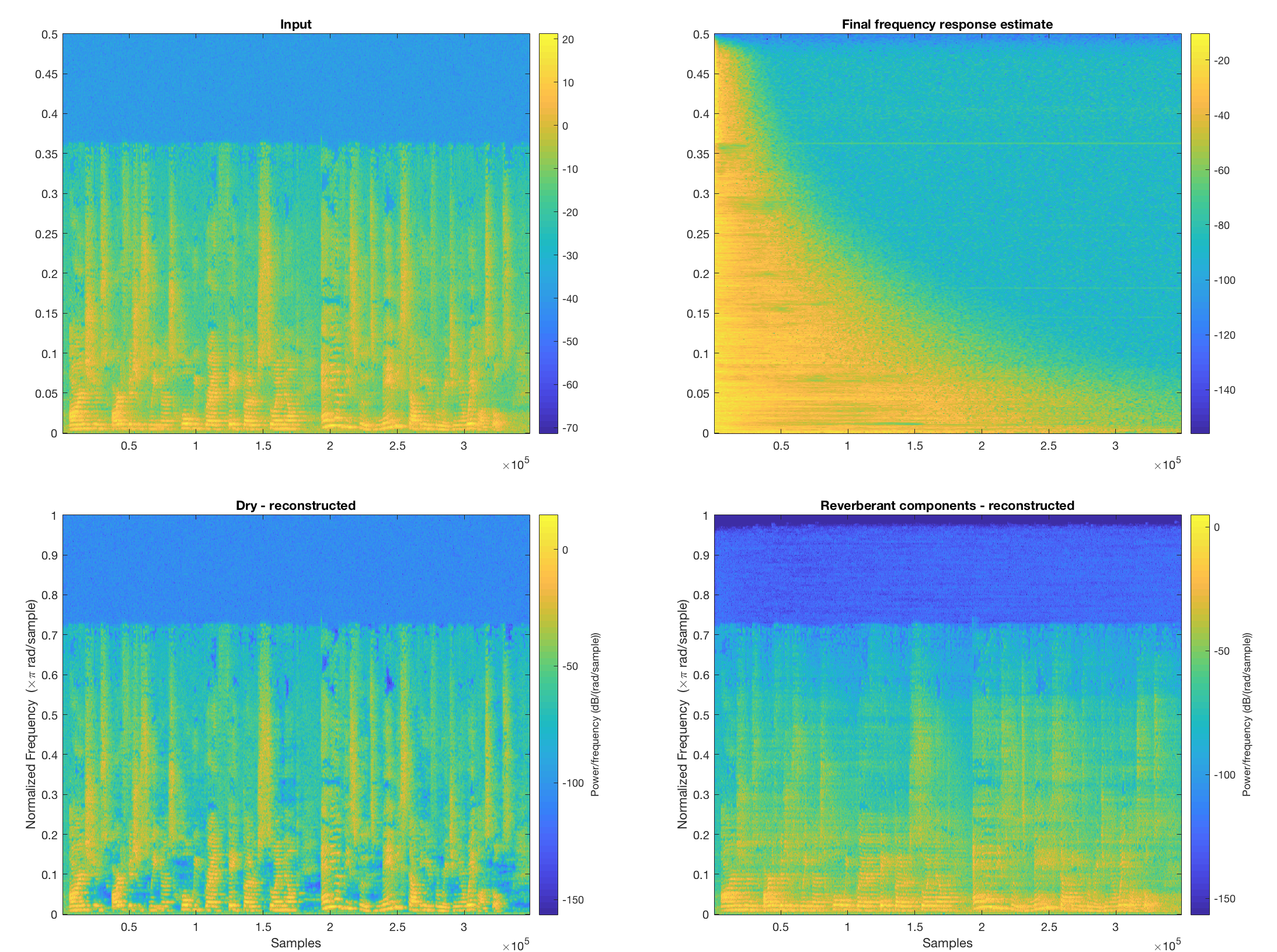

Here is a spectrogram of an example speech input and the algorithm's dereverberated results: